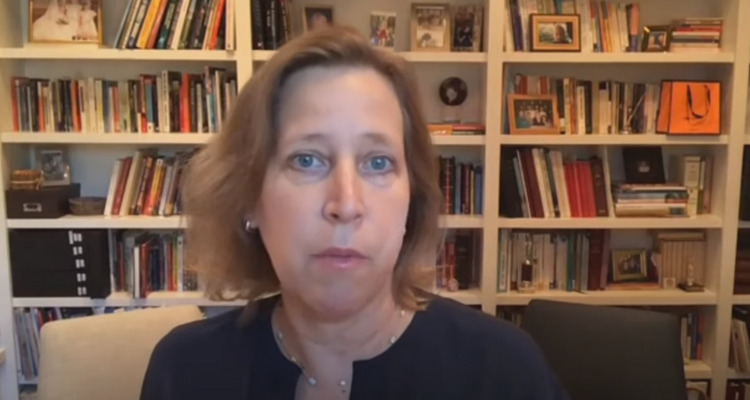

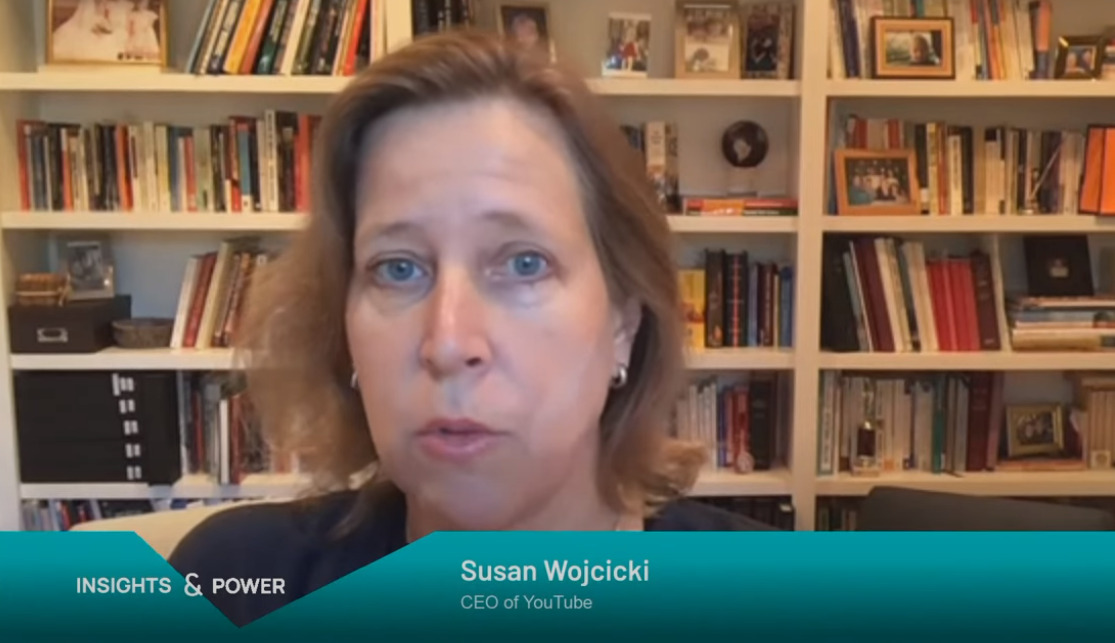

YouTube CEO Susan Wojcicki Advocates For Governments To Pass Laws Restricting Speech So YouTube Can Enforce Them

In a recent interview, YouTube CEO Susan Wojcicki advocated for governments to pass new laws restricting speech so that she and YouTube can enforce them.

Source: TIDETVhamburg YouTube

Wojcicki’s anti-free speech stance came during an appearance on TIDETV Hamburg’s YouTube channel as part of their A Distinguished Conversation Series, which is moderated by Dr. Matthias C. Kettemann. Wojcicki was joined by Dr. Wolfgang Schulz, the Director of the Leibniz Institute for Media Research.

During the discussion, Wojcicki revealed that YouTube rolled out a 100% machine-automated content moderation system.

She stated, “Within a couple of weeks, we actually built a system to move a hundred percent to machines because we knew the pandemic was coming, we knew that we were going to have to lose our workers, and in a couple of weeks we built this 100% automated system to manage content moderation.”

Source: Age of Ultron #2

From there, Wojcicki described how their content moderation system worked before they switched to 100% automation, “We use our machines basically to go out and cast a net and identify what is content that could be problematic. And then we use the human reviewers to say, ‘Yes, it is problematic, or no, it’s not.’”

“There’s adult content, for example, that’s very easy to identify with machines, but something like hate and harassment has a lot more nuance and context and could be mixed with political speech. And that’s where we would want to have humans spend more time understanding the context to make the right decisions,” she added.

Source: Age of Ultron #2

She then described how their 100% automated system works, “Basically, we built the system that worked entirely with machines and we used the small set of full-time employees to handle the appeals. When people said, ‘No, you made a mistake. Can you check?’ So, we used our full-time employees for that.”

She went on to admit this strategy did not work out as well as they had planned as they made “mistakes” and possibly crushed numerous small businesses.

With those “mistakes” being made, Wojcicki says they’ve “gone back to using a combination of machines and people.”

Source: The Amazing Spider-Man #537

After Wojcicki briefly detailed how YouTube’s content moderation system works, Kettemann asked her, “You’ve mentioned the important position that YouTube has in how to deal with content, whether to downrank it, to demonetize it. Depending on the jurisdiction you’re in, in some countries like Germany, courts have taken a rather strong position on the limits of what platforms can do, whether they must not act arbitrarily or that they have to stick to their standards and implement them.”

He added, “So how does that work in practice? Perhaps you can elaborate a bit on that. How do you make sure that in light of the many different jurisdictions you have navigate this minefield between national jurisdictions between keeping advertisers happy, between keeping users interested?”

Source: Age of Ultron #4

In response, Wojcicki stated, “First of all, I’ll say that we work around the globe and you’re right, certainly there are many different laws and many different jurisdictions and we enforce the laws of the various jurisdictions around speech or what’s considered safe or not safe. That’s true for democratically elected governments. It might get a little bit more complicated in non-democratic governments. So basically we enforce those laws.”

“That actually hasn’t been the controversial part. What has been the controversial part has been when there is content that would be deemed as harmful, but yet, is not illegal. An example of that, for example, would be Covid. I’m not aware of there being laws by government around Covid in terms of not being able to debate the efficacy of masks or where the virus came from or the right treatment or proposal,” she stated.

“But yet there was a lot of pressure and concern about us distributing misinformation that went against what was considered the standard and accepted medical knowledge. So, this category of harmful, but legal has been I think where most of the discussion has been,” Wojcicki added.

Source: Age of Ultron #5

“For us, we look at that content and we think about the role that we play in society. We want to be doing the right thing for our users and for our creators,” Wojcicki said. “We also generate revenue from advertisers and if we are serving content that is seen by our advertising community as not benefiting society no advertiser is going to want to appear on that. And they’re certainly not going to even want to appear on a different, you know, content that is positive if they think the platform as a whole is not being responsible.”

Wojcicki continued, “So we are generally very aligned. Responsibility is good for our business. And we have over 2 million creators on our platform that we share revenue with. So if we aren’t generating revenue for them then that’s a problem for our creators. They create beautiful and incredible content and we share the majority of revenue with them.”

Source: Age of Ultron #4

Next, she stated, “I think governments can always — our recommendation if governments want to have more control of online speech is to pass laws to have that be very cleanly and clearly defined such that we can implement it.”

“There are times that we see the laws being implemented or being suggested that they’re not necessarily clean or possible for us to cleanly interpret them. And we’ve also seen sometimes there’s laws passed just for the internet as opposed to for all speech,” Wojcicki detailed.

“I do think that’s a dangerous area when we start to get in and say, ‘Oh sure you could say something like this in a magazine or on TV, but you can’t say it on the internet,’” she concluded.

Source: Deadpool #48

What do you make of Wojcicki’s recommendations to governments to pass laws to more heavily restrict speech in order for YouTube to implement them?
